Compositional Distributional Models of Meaning

Where natural language semantics meets quantum information flow...

The problem of representing natural language meaning in a computationally tractable way has been approached in various and often mutually incompatible ways. Two such orthogonal classes of semantic representation are distributional and symbolic models of meaning. Distributional models of meaning exploit the co-occurrence of other terms with the term being modelled to determine its semantic content, applying Firth's well-known dictum that "You shall know a word by the company it keeps"; while the symbolic approach usually exploits grammatical features of sentences to model relations between entities in the world, thereby expressing the truth conditions of sentences.

These approaches seem theoretically orthogonal, and hence incompatible in implementation. Distributional models of meaning are quantitative and express the semantic relation between terms, but offer no immediately obvious way of modelling the contribution of sentence structure to meaning; qualitative symbolic approaches, in turn, typically leave the semantics of individual words "mysterious" and ill-defined.
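The distributional idea can be illustrated with a minimal sketch: count which words occur near each target word, then compare the resulting count vectors by cosine similarity. The toy corpus, the window size, and the function names below are assumptions made for illustration only; real distributional models use large corpora and usually reweight the raw counts.

```python
import math
from collections import Counter, defaultdict

# Toy corpus invented for illustration; not data from the project.
CORPUS = [
    "the cat chased the mouse",
    "the dog chased the cat",
    "the dog ate the bone",
    "the cat ate the fish",
    "the student read the book",
    "the teacher read the paper",
]

def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, target in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[target][tokens[j]] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * v[word] for word, count in u.items())
    norm_u = math.sqrt(sum(c * c for c in u.values()))
    norm_v = math.sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

vectors = cooccurrence_vectors(CORPUS)
sim_cat_dog = cosine(vectors["cat"], vectors["dog"])
sim_cat_book = cosine(vectors["cat"], vectors["book"])
print(f"cat~dog:  {sim_cat_dog:.3f}")
print(f"cat~book: {sim_cat_book:.3f}")
```

In this toy corpus "cat" and "dog" share more context words than "cat" and "book", so the first similarity comes out higher. Note that the vectors say nothing about how the words combine into a sentence, which is the gap described above.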

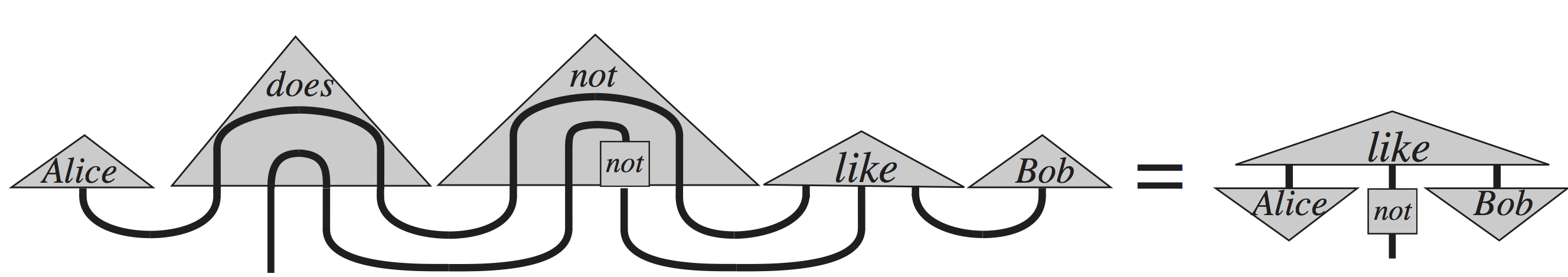

This project seeks to reconcile these apparently orthogonal representations, guided by the intuition that lexical co-relation and grammatical roles correspond to different ways of "knowing how to use" language. Both aspects provide relevant information when we understand the meaning of an expression, and hence both are viable components for a new representation modelling natural language semantics. Category-theoretic methods from quantum information theory provide the building blocks for a general framework in which to combine symbolic and distributional approaches to expressing and comparing the meaning of sentences.
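One way to picture how grammatical structure can act on word vectors is the following minimal sketch. It assumes a simplification in which the sentence space is one-dimensional, so that a transitive verb reduces to a matrix over the noun space and a subject-verb-object sentence is scored by contracting that matrix with the subject and object vectors. The noun vectors, the feature names, the way the verb matrix is built, and all function names are hypothetical and chosen for illustration; they are not taken from the project's publications.

```python
# Toy 2-dimensional noun space with hypothetical basis features
# ("animate", "edible"); all numbers are invented for illustration.
NOUNS = {
    "dog":  [0.9, 0.1],
    "cat":  [0.8, 0.2],
    "bone": [0.1, 0.9],
    "fish": [0.5, 0.8],
}

def outer(u, v):
    """Outer product of two vectors, returned as a nested list."""
    return [[a * b for b in v] for a in u]

def add(m1, m2):
    """Element-wise sum of two matrices of the same shape."""
    return [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(m1, m2)]

def verb_matrix(pairs):
    """Assumed construction: sum the outer products of (subject, object) pairs."""
    dim = len(next(iter(NOUNS.values())))
    matrix = [[0.0] * dim for _ in range(dim)]
    for subj, obj in pairs:
        matrix = add(matrix, outer(NOUNS[subj], NOUNS[obj]))
    return matrix

def sentence_score(subj, verb, obj):
    """Contract the verb matrix with subject and object vectors: s^T V o."""
    s, o = NOUNS[subj], NOUNS[obj]
    return sum(
        s[i] * verb[i][j] * o[j]
        for i in range(len(s))
        for j in range(len(o))
    )

# Hypothetical "observed" uses of the verb "eats".
eats = verb_matrix([("dog", "bone"), ("cat", "fish")])

forward = sentence_score("dog", eats, "bone")   # "dog eats bone"
reverse = sentence_score("bone", eats, "dog")   # "bone eats dog"
print(f"dog eats bone: {forward:.4f}")
print(f"bone eats dog: {reverse:.4f}")
```

Because the verb is a matrix rather than a vector, swapping subject and object changes the result, so word order contributes to the computed meaning in a way that simply adding word vectors would not capture.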

In bringing together expertise from the fields of formal logic, philosophy of language, computational linguistics, pure mathematics and theoretical physics, this project aims to produce a new model of natural language semantics for use in the development of more sophisticated text processing applications, document retrieval systems, intelligent agents, etc.

Project Links

This research is currently supported by the EPSRC (grant EP/I03808X/1) as part of a multi-site grant (Cambridge, Edinburgh, Oxford, Sussex, York) for the project A Unified Model of Compositional and Distributional Semantics: Theory and Applications (Principal Investigators: Profs Bob Coecke and Stephen Pulman).

A public mailing list, disco-news@maillist.ox.ac.uk, has been created for interested parties wanting to receive updates about the project regarding publications, workshops, conference talks and other matters. This mailing list can be joined by emailing disco-news-subscribe@maillist.ox.ac.uk.

An internal mailing list exists for local activity participants. To be added please contact Martha Lewis.

A list of relevant upcoming conferences and workshops, as well as publication venues, is maintained here.

External Collaborators

Past Events

- QI 2008

- Flowin'Cat 2010

- IWCS 2011

- QI 2011

- GEMS 2011

- EMNLP 2011

- Coling 2012

- IWCS 2013

- CoNLL 2013

- EMNLP 2013

Datasets

- Grefenstette and Sadrzadeh Compositional Distributional Model Evaluation Dataset, EMNLP 2011

- Grefenstette and Sadrzadeh Compositional Distributional Model Evaluation Dataset, adjective-noun based transitive sentences, 2012

- Kartsaklis, Sadrzadeh and Pulman Term-Definition Dataset, COLING 2012

- Disambiguation Dataset used in Kartsaklis et al. CoNLL 2013. This previously unpublished dataset was produced by Edward Grefenstette and Mehrnoosh Sadrzadeh.

- Kartsaklis and Sadrzadeh transitive sentence similarity dataset, EMNLP 2013. This dataset extends the verb-object part of the Mitchell and Lapata (2010) dataset by the introduction of appropriate subject nouns. This version uses the original human judgements from the M&L 2010 dataset.

- Kartsaklis and Sadrzadeh transitive sentence similarity dataset, QPL 2014. This is the same dataset as the one used in the EMNLP 2013 paper, but with re-evaluated human scores collected from Amazon Mechanical Turk.

Emeritus Faculty

Past Members

Selected Publications

- Investigating the Role of Prior Disambiguation in Deep-Learning Compositional Models of Meaning

  Jianpeng Cheng, Dimitri Kartsaklis and Edward Grefenstette

  In Learning Semantics workshop, NIPS 2014. Montreal, Canada. December, 2014.


- Evaluating Neural Word Representations in Tensor-Based Compositional Settings

  Dmitrijs Milajevs, Dimitri Kartsaklis, Mehrnoosh Sadrzadeh and Matthew Purver

  In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP). Doha, Qatar. October, 2014. Association for Computational Linguistics.


- A Study of Entanglement in a Categorical Framework of Natural Language

  Dimitri Kartsaklis and Mehrnoosh Sadrzadeh

  In Proceedings of the 11th Workshop on Quantum Physics and Logic (QPL). Kyoto, Japan. June, 2014.
